VAST lab at UCLA

The VAST lab at UCLA investigates cutting-edge research topics at the intersection of VLSI technologies, design automation, architecture, compilation, and algorithm optimization at multiple scales, from micro-architecture building blocks to heterogeneous compute nodes and scalable data centers. Current focuses include architecture and design automation for efficient general intelligence and customizable domain-specific computing, with applications to multiple domains such as deep learning, satisfiability solving, large-scale data processing, and image processing.

Latest News

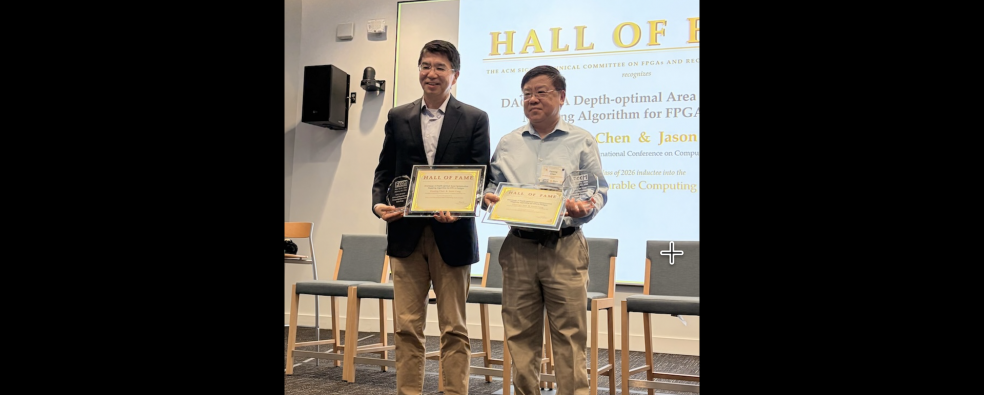

The paper by Jason and his former student Deming Chen (now the Abel Bliss professor at UIUC) entitled “DAOmap: a depth-optimal area optimization mapping algorithm for FPGA designs” was inducted into the FPGA and Reconfigurable Computing Hall of Fame on May 15, 2026 at the IEEE FCCM’2026...

Wan-Hsuan’s thesis “Compilation and Architecture Design for Quantum Computing” focuses on design automation for quantum computing, developing scalable compilation tools and advancing architecture–software co-design to bridge the gap between rapidly evolving quantum hardware and limited compiler...

Prof. Jason Cong received the Pender Award from the School of Engineering and Applied Science at the University of Pennsylvania on March 18, 2025 “for groundbreaking contributions in field-programmable gate arrays and electronic design automation”. Established in 1972, the Pender Award,...

Latest Publications

Our Projects

Project Description:

Domain-specific accelerators (DSAs) have been shown to offer significant performance and energy-efficiency gains over general-purpose CPUs, helping to meet ever-increasing performance needs. However, it is well known that the DSAs in field-programmable gate arrays (FPGAs) or...

Our lab focuses on advancing quantum compilation techniques to enhance the efficiency and scalability of quantum computing. We focus on Quantum Layout Synthesis (QLS), developing optimal and heuristic methods for mapping quantum algorithms onto hardware, including reconfigurable...

Heterogeneous computing with extensive use of accelerators, such as FPGAs and GPUs, has shown great promise to deliver orders-of-magnitude improvements in computing efficiency for a wide range of applications. The latest advances in industry have led to highly integrated heterogeneous hardware...

Direction 1: Real-Time Neural Signal Processing for Closed-Loop Neurofeedback Applications.

The miniaturized fluorescence microscope (Miniscope) and the tetrode assembly are emerging techniques for observing the activity of a large population of neurons in vivo. They open up new research...

In the Big Data era, the volume of data is exploding, posing a new challenge to existing computer systems. Traditionally, computer systems are designed to be computing-centric: data from IO devices is transferred to the CPU and then processed there. However, this data movement...

Summary

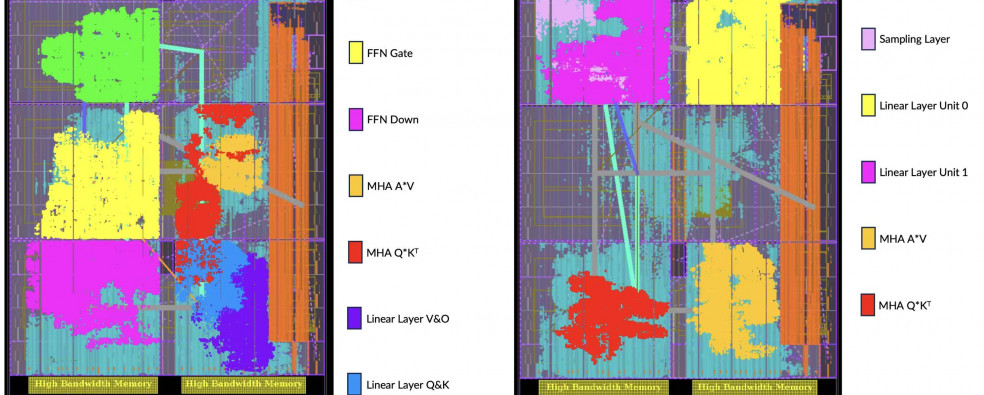

In this project, we focus on improving the efficiency of large and small language models and potentially extend to general deep neural networks for other applications. In terms of efficiency, we believe there are three key metrics to pay attention to:

...In the era of big data, many applications present significant computational challenges. For example, in the field of bioinformatics, the computational demand for personalized cancer treatment is prohibitively high for general-purpose computing technologies, as tumor heterogeneity...

To meet ever-increasing computing needs and overcome power density limitations, the computing industry has entered the era of parallelization, with tens to hundreds of computing cores integrated into a single...

Software Releases

https://github.com/UCLA-VAST/EBMF This project provides an SMT-based solving method and a heuristic, row packing, for the exact binary matrix factorization (EBMF) problem. Additionally, we provide an SMT method to find the fooling set size of a binary...

Optimal Layout Synthesizer of Quantum Circuits for Dynamically Field-Programmable Qubits Array. https://github.com/UCLA-VAST/DPQA